Real-Time Data Integration: Common Challenges

Real-time data integration can transform the way commercial real estate (CRE) firms operate, but it’s not without obstacles. CRE professionals face issues like poor data quality, fragmented systems, and outdated infrastructure, which slow down decision-making and increase costs. Here's a quick breakdown of the main challenges and solutions:

Data Quality Issues: Errors and inconsistencies in data cost businesses millions annually and disrupt automated processes.

High Data Volume: Legacy systems struggle to handle the growing influx of information from IoT devices and other sources.

Latency Problems: Delays in processing data undermine the effectiveness of real-time analytics.

Data Silos: Isolated systems and incompatible formats make integration difficult.

Scalability Limits: Outdated systems fail to keep up with growing portfolios and data demands.

Solutions include tools like CoreCast for centralized data management, Change Data Capture (CDC) for real-time updates, and robust data governance practices to ensure accuracy and consistency. Firms must also choose between batch processing for periodic updates and streaming integration for continuous data flow, balancing cost and speed.

Real-time integration is critical for improving efficiency, reducing errors, and enabling faster decisions in a competitive market. CRE firms can overcome these challenges by investing in modern systems and structured rollout strategies.

Common Challenges in Real-Time Data Integration

Real-time data integration might sound straightforward in theory, but for CRE professionals, it often comes with significant hurdles. Understanding these challenges is key to building systems that enable smarter, faster decisions. At the core of these issues lies the ever-present problem of data quality, which can disrupt every stage of integration.

Poor Data Quality and Inconsistencies

Bad data isn't just an inconvenience - it’s a costly problem. Inaccurate data costs organizations between $12.9 million and $15 million annually[3]. For CRE firms, the issue is amplified when data is scattered across disconnected platforms like accounting, leasing, and operations systems. This fragmentation creates multiple "truths", forcing teams to spend valuable time reconciling discrepancies manually. Manual data entry adds errors of its own, such as typos or inconsistent naming conventions (think "123 Main St." versus "123 Main Street"), that disrupt automated processes. Across U.S. businesses, the cost of poor-quality data has been estimated at a staggering $3.1 trillion annually[1].
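The naming-convention mismatch mentioned above ("123 Main St." versus "123 Main Street") is the kind of problem a small normalization step can catch before records are compared. The following is a minimal sketch, not a production address parser: the `SUFFIXES` table and the `normalize_address` function are hypothetical names introduced here for illustration, and the table covers only a handful of abbreviations.

```python
import re

# Hypothetical abbreviation table for illustration; a real one would be far larger.
# Caveat: "st" can also mean "saint" (e.g. "St. Louis Ave"), which this sketch ignores.
SUFFIXES = {
    "st": "street",
    "ave": "avenue",
    "blvd": "boulevard",
    "rd": "road",
    "dr": "drive",
}


def normalize_address(raw: str) -> str:
    """Lowercase, replace punctuation with spaces, and expand known suffixes."""
    cleaned = re.sub(r"[^\w\s]", " ", raw.lower())
    tokens = cleaned.split()
    return " ".join(SUFFIXES.get(token, token) for token in tokens)


# Both spellings collapse to the same key, so they can be matched automatically.
same = normalize_address("123 Main St.") == normalize_address("123 Main Street")
```

Comparing normalized keys rather than raw strings lets two systems agree that they are describing the same property without anyone reconciling the records by hand.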

As Stephanie O'Neill from JLLT puts it:

"A data-driven decision is only as good as the data behind it. If you rely on poor-quality data, then poor-quality decisions are likely to follow"[3].

The good news? Tools like digital reporting platforms with standardized templates can cut data entry errors by up to 95%[1]. But even with cleaner data, other challenges loom, especially as data volume and speed continue to grow.

High Data Volume and Velocity

Modern CRE operations churn out massive amounts of data from IoT devices, transaction feeds, and market updates. By 2025, global data volume is expected to hit a jaw-dropping 175 zettabytes[5]. Unfortunately, many on-premises systems simply can’t handle this influx in real time, leading to delays and even system crashes.

Manual workflows only make things worse. Around 80% of asset management professionals lose about 30 minutes daily just retrieving investment data[1]. This not only wastes time but also increases the risk of errors and outdated information. Meanwhile, firms pour resources into maintaining outdated systems: nearly 40% of IT budgets are consumed by technical debt[3]. Much of that effort goes into forcing high-speed, high-volume data through systems designed for slower batch processing.

Latency and Performance Bottlenecks

Real-time analytics rely on fast, seamless data movement. But when there are delays - whether due to on-premises systems, cloud environments, or hybrid setups - data quickly becomes outdated. This can be disastrous for time-sensitive tasks like updating investor dashboards or operational reports. Performance issues often stem from poorly allocated resources, unoptimized data pipelines, or systems originally built for batch processing rather than continuous data streams. These delays undermine the very promise of real-time integration: timely, accurate insights.

Data Silos and Incompatible Formats

One of the biggest hurdles in CRE is the fragmentation of data across isolated systems. Financial records, tenant information, and property operations often reside in separate platforms, making integration a nightmare. A striking 90% of large enterprises still rely on legacy systems for critical operations, yet 75% report major difficulties integrating them due to outdated APIs and limited connectivity[3].

Off-the-shelf CRE CRMs often come with rigid data schemas and sluggish APIs, lacking the webhook functionality needed for instant data retrieval. This forces analysts to manually research each property - a process that takes roughly 20 minutes per property and carries a high risk of errors[4]. On top of that, incompatible file formats require extensive data conversion, adding yet another layer of complexity. It’s no wonder that 34.43% of professionals identify interoperability with legacy systems as their top integration challenge[3].

Scalability and Legacy System Constraints

As CRE portfolios grow and new data sources emerge, outdated infrastructure often struggles to keep up. Legacy systems frequently hit their limits, requiring costly hardware upgrades to handle increasing data demands. Around 30% of CIOs report spending over 20% of their budgets just to address legacy integration issues[3]. When these systems fail to scale, teams often fall back on manual processes, which only deepen inefficiencies and hinder the real-time visibility needed for effective portfolio management and investor reporting.

Solutions for Real-Time Data Integration in CRE

Solving real-time data integration challenges in the commercial real estate (CRE) sector requires targeted, modern approaches. These solutions focus on improving data quality, minimizing delays, and ensuring scalability, sidestepping the inefficiencies of outdated systems. Let’s explore a few key strategies.

Using CoreCast for Data Integration

CoreCast is a platform designed to bring multiple property management, financial, and market systems together under one interface. It automatically parses documents like rent rolls and operating statements and updates financial models and dashboards as soon as they are ingested. Its live-linked portfolio structure means that any property-level update propagates immediately across the portfolio. CoreCast also includes built-in validation tools that flag potential market anomalies, such as unusual cap rates or rent growth patterns, helping to prevent errors. It is built to grow with your portfolio - no expensive hardware upgrades required. While CoreCast is currently designed for multifamily acquisitions, we invite you to talk with us about other potential use cases and help us grow the platform.
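To make the idea of flagging an unusual cap rate concrete, here is a generic sketch of a range check. It is not CoreCast's implementation, which the article does not describe. The function names and the 3% to 10% band are assumptions chosen purely for illustration; only the cap rate formula itself (net operating income divided by purchase price) is standard.

```python
def cap_rate(net_operating_income: float, purchase_price: float) -> float:
    """Capitalization rate: annual net operating income divided by price."""
    if purchase_price <= 0:
        raise ValueError("purchase_price must be positive")
    return net_operating_income / purchase_price


def is_unusual_cap_rate(rate: float, low: float = 0.03, high: float = 0.10) -> bool:
    """Flag rates outside an assumed plausible band (hypothetical thresholds)."""
    return not (low <= rate <= high)


typical = cap_rate(500_000, 10_000_000)      # 5% of price
suspicious = cap_rate(150_000, 10_000_000)   # 1.5% of price, possibly a data-entry error
```

A flagged value is a prompt for review rather than proof of an error, since the right band depends on market and asset class.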

Adopting Change Data Capture (CDC) Techniques

Change Data Capture (CDC) is a method that focuses on capturing only the data that has changed, rather than reprocessing entire datasets. This significantly reduces delays by synchronizing updates across systems in near real time. For instance, whether it’s a lease renewal, rent payment, or an update to operating expenses, CDC ensures your dashboards are always up-to-date. At the same time, it minimizes the computational strain on your infrastructure, making it an efficient solution for keeping information current.
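The core of the approach can be sketched in a few lines: instead of reloading a whole table, the consumer applies a stream of small change events to its own copy. The event format below (`op`, `key`, `changes`) is a hypothetical one made up for this example. Real CDC tools typically read a database's transaction log and emit their own event structures.

```python
def apply_change(target: dict, event: dict) -> None:
    """Apply one change event to an in-memory copy of a table keyed by row ID."""
    op, key = event["op"], event["key"]
    if op in ("insert", "update"):
        # Merge only the changed columns into the row, creating it if needed.
        target.setdefault(key, {}).update(event["changes"])
    elif op == "delete":
        target.pop(key, None)
    else:
        raise ValueError(f"unknown operation: {op}")


# Downstream copy of a lease table (illustrative data).
leases = {"unit-101": {"tenant": "Acme LLC", "monthly_rent": 2400}}

# Two changes arrive: a rent update on a renewal and a newly signed lease.
events = [
    {"op": "update", "key": "unit-101", "changes": {"monthly_rent": 2550}},
    {"op": "insert", "key": "unit-102",
     "changes": {"tenant": "Birch Co", "monthly_rent": 1900}},
]
for event in events:
    apply_change(leases, event)
```

Only the two changed rows are touched, which is why the computational load stays low even when the underlying table is large.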

Establishing Data Governance Practices

Technology alone isn’t enough - strong governance is critical for maintaining data accuracy and consistency. A robust data governance framework includes standardized validation checkpoints, mapping key fields between different systems, and documenting transformation rules. This process ensures consistency and reliability across platforms[3].
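As a rough illustration of what a field mapping and a validation checkpoint might look like in practice, consider the sketch below. The field names in `FIELD_MAP` and the two rules in `validate` are assumptions invented for this example. An actual framework would document many more fields and rules.

```python
# Hypothetical mapping from one source system's field names to shared names.
FIELD_MAP = {
    "prop_id": "property_id",
    "rent_amt": "monthly_rent",
    "sqft": "square_feet",
}


def map_fields(record: dict) -> dict:
    """Rename source fields to the shared schema; unmapped fields are dropped."""
    return {FIELD_MAP[name]: value for name, value in record.items() if name in FIELD_MAP}


def validate(record: dict) -> list:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    if not record.get("property_id"):
        problems.append("missing property_id")
    rent = record.get("monthly_rent")
    if not isinstance(rent, (int, float)) or rent < 0:
        problems.append("monthly_rent must be a non-negative number")
    return problems


mapped = map_fields({"prop_id": "P-1", "rent_amt": 2400, "notes": "corner unit"})
issues = validate(mapped)
```

Keeping the mapping in one declared table, rather than scattered through scripts, is what makes the transformation rules easy to document and audit.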

Key components of effective governance include:

Assigning team members to oversee data quality.

Implementing role-based access controls to prevent unauthorized changes.

Keeping detailed records of data sources and any known issues.

Collaboration across departments like legal, finance, operations, and IT is crucial for ensuring compliance with regulations and maintaining technical accuracy. Regular training in data literacy also helps reduce human errors. By establishing clear rules for data retention, update schedules, and resolving conflicts, your organization can ensure reliable, real-time integration that supports timely and accurate decision-making.

Batch Processing vs. Streaming Integration

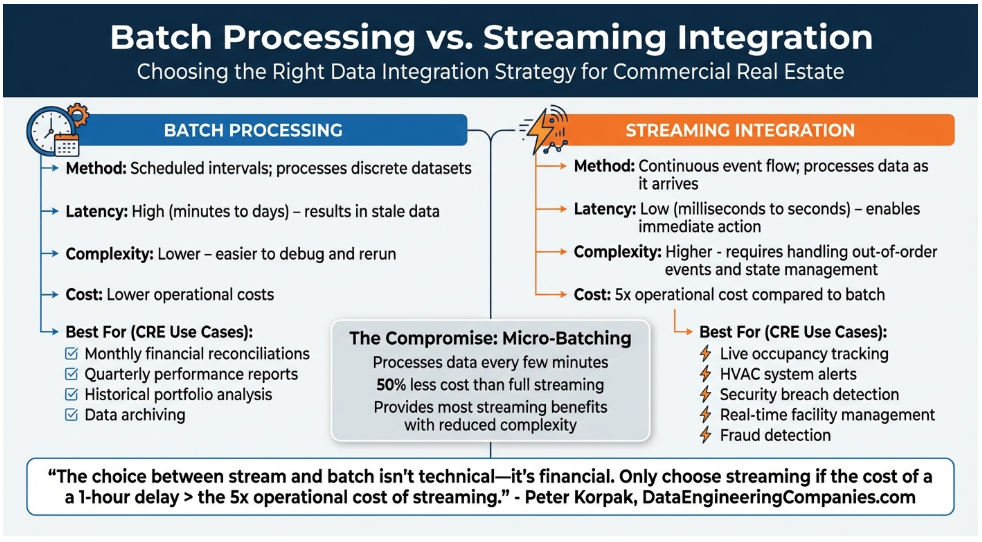

After tackling data quality and latency issues, CRE firms face another critical decision: selecting the right integration strategy. Choosing between batch processing and streaming integration is essential for aligning technology with business needs and priorities.

These two methods differ significantly in how they handle data. Batch processing collects data and processes it at scheduled intervals, such as hourly or nightly. In contrast, streaming integration processes data continuously as it arrives, providing updates almost instantly[8].

This choice isn't just about technology - it has financial implications. As Peter Korpak, Chief Analyst & Founder of DataEngineeringCompanies.com, explains:

"The choice between stream and batch isn't technical - it's financial. Only choose streaming if the cost of a 1-hour delay > the 5x operational cost of streaming." [10]
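One possible reading of the rule quoted above is that streaming costs roughly five times as much to operate as batch, and is only worth it when the cost of delay exceeds that figure. The sketch below encodes that reading. The function name, its parameters, and the assumption that both costs are measured in dollars over the same period are illustrative choices, not part of the quote.

```python
def prefer_streaming(
    delay_cost: float,
    batch_operating_cost: float,
    streaming_multiplier: float = 5.0,
) -> bool:
    """Return True when the cost of delay exceeds the assumed cost of streaming.

    Both cost arguments must cover the same period (for example, one month).
    """
    streaming_operating_cost = batch_operating_cost * streaming_multiplier
    return delay_cost > streaming_operating_cost


# Delays cost $12,000 per month and batch costs $2,000: streaming ($10,000) pays off.
worth_it = prefer_streaming(delay_cost=12_000, batch_operating_cost=2_000)

# Delays cost only $8,000 per month: the assumed $10,000 streaming bill is not justified.
not_worth_it = prefer_streaming(delay_cost=8_000, batch_operating_cost=2_000)
```

The hard part in practice is estimating the cost of delay. The comparison itself is simple once that number exists.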

Batch processing is simpler to set up and troubleshoot, making it suitable for tasks where accuracy takes precedence over speed. For example, it's ideal for monthly financial reconciliations or quarterly performance reports. It’s also more budget-friendly for non-urgent workloads since computing resources are only used during scheduled runs. However, failed jobs can be cumbersome, often requiring the reprocessing of entire datasets[9].

On the other hand, streaming integration shines in scenarios where delays can lead to financial losses or increased risk. Real-time applications like occupancy tracking, HVAC system alerts, and security breach detection rely on immediate data processing to function effectively[11]. However, this method comes with higher costs and complexity, requiring always-on infrastructure and advanced engineering to manage challenges like out-of-order events and stateful processing[10].

For many CRE firms, micro-batching offers a practical compromise. By processing data every few minutes, it provides most of the benefits of streaming with less complexity and at roughly 50% lower cost than full-scale streaming[10].
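The mechanics of micro-batching can be sketched as a buffer that releases its contents once per interval. The `MicroBatcher` class below is a hypothetical, simplified illustration: timestamps are passed in explicitly so the behavior is easy to follow, and a batch is only released when a new event arrives. A real pipeline would also flush on a timer so that quiet periods do not hold data back.

```python
class MicroBatcher:
    """Buffer events and release them as one batch per time interval."""

    def __init__(self, interval_seconds: float):
        self.interval = interval_seconds
        self.buffer = []
        self.window_start = None

    def add(self, event, timestamp: float):
        """Add an event; return the finished batch if the interval elapsed, else None."""
        if self.window_start is None:
            self.window_start = timestamp
        if timestamp - self.window_start >= self.interval:
            # Close the current window and start a new one with this event.
            batch, self.buffer = self.buffer, [event]
            self.window_start = timestamp
            return batch
        self.buffer.append(event)
        return None


batcher = MicroBatcher(interval_seconds=300)  # five-minute windows
first = batcher.add("sensor reading A", timestamp=0)
second = batcher.add("sensor reading B", timestamp=120)
third = batcher.add("sensor reading C", timestamp=310)  # closes the first window
```

Here the first two calls return nothing, and the third returns the two buffered readings as a single batch for downstream processing.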

Conclusion

Real-time data integration is reshaping the way commercial real estate (CRE) operates. The numbers speak for themselves: poor data quality costs organizations between $12.9 million and $15 million annually [3], while fragmented systems force professionals to spend a staggering 80% of their time preparing data instead of analyzing it for insights [3]. On top of that, legacy systems, security concerns, and integration strategy hurdles make modernization even tougher.

However, there’s a silver lining. Centralized data automation can increase operational efficiency by up to 70% [1] and slash reporting time in half [1]. One global financial institution demonstrated this by cutting $150 million - one-third of its $450 million property management budget - through vendor consolidation and process standardization made possible by integration [3].

To overcome legacy challenges, a structured rollout is key. Start small with a phased integration strategy: map existing data silos, pilot integrations, and gradually scale up. Ensuring clear data ownership and governance practices will help maintain quality as you expand. When choosing an integration strategy, prioritize your business goals rather than focusing solely on technical features.

For those looking for a practical solution, CoreCast offers a centralized command center that brings property management systems, financial tools, and market data together into one unified platform [3][6]. Currently available in beta for $50 per user per month, CoreCast automates data ingestion and provides real-time performance dashboards [1][2]. For firms needing deeper financial analysis, The Fractional Analyst offers expert services starting at $95 per hour [1].

As CRE transitions toward institutional-grade infrastructure, robust data governance is no longer optional - it’s essential for attracting capital [6]. Tackling integration challenges today means faster decision-making, fewer mistakes, and improved transparency for investors.

FAQs

When should you use streaming updates instead of batch updates?

Streaming updates are ideal when you need real-time processing. Think fraud detection, live dashboards, or instant personalization - these systems handle data continuously and respond in milliseconds to seconds. On the other hand, batch updates work well for tasks like generating historical reports or managing periodic data loads, where speed isn't as critical, and keeping costs lower takes priority. If your needs fall somewhere in between, hybrid architectures can blend both methods to meet diverse requirements efficiently.

What is the fastest way to address poor CRE data quality?

The fastest way to address poor CRE data quality is to clean and validate the data before it enters the integration pipeline. That means removing duplicates, resolving inconsistencies such as mismatched property names or addresses, and correcting inaccurate values. Standardized, validated data yields more reliable insights and causes fewer errors during integration.

To keep the gains, plan the cleanup up front, validate on a consistent schedule, and document the rules you apply so that fixes are repeatable.

How can you link legacy systems with modern platforms without starting from scratch?

To link legacy systems with modern platforms without starting from scratch, APIs and integration tools can bridge the gap, allowing smooth communication between old and new technologies. Implementing multi-tenant integration management helps improve compatibility across systems while minimizing the accumulation of technical debt. Additionally, automating data standardization processes and leveraging centralized platforms ensures consistent data handling and boosts operational efficiency - all without the need for a full system rebuild.